How a Hiring Platform Earned More Visibility in AI Overviews and Search Results

Inside the strategy that helped a hiring brand get found more often in both AI answers and Google search

Executive Summary

This article is adapted from a CiteWorks Studio case study about a job posting platform confronting a new kind of visibility problem: not just where it ranked, but how AI systems described it when employers went looking for answers.

As AI Overviews, ChatGPT, Gemini, and similar tools became part of the evaluation process, the brand had to improve more than search performance. It had to improve its citation architecture, AI visibility, and recommendation-stage presence.

- Employers were increasingly using AI-generated answers to compare job posting platforms before making a decision.

- The risk was no longer just weak rankings or scattered reputation signals, but which sources AI systems were using to frame the brand.

- Reported outcomes included a 71% increase in brand mentions in AI Overviews and 2,791 keywords ranking in Google’s top 10.

- The campaign also improved citation context across 100+ pages influencing AI-generated answers.

- The broader lesson is simple: brands now compete in two discovery systems at once, traditional search and AI-generated recommendations.

For years, brands knew what visibility meant.

You ranked well, earned clicks, and stayed present when buyers searched for solutions. But that model is changing fast. In categories where trust matters, buyers are no longer relying on blue links alone.

They are asking AI tools to summarize options, compare vendors, and point them toward the most credible choice.

That shift created a new challenge for one job posting platform featured in the original CiteWorks Studio case study, "Job Board AI Search Case Study."

The issue was not just whether the brand appeared in search. It was whether AI systems were pulling from the right sources when employers asked who they should trust, compare, or consider next.

That is what makes this case worth reading. It shows how discovery changes when AI systems do not simply rank pages, but synthesize brand perception from forums, articles, comparison pages, and public discussion.

In that environment, visibility depends on what AI can find, trust, and repeat.

What Changed in This Market

The hiring market has become more complicated than a standard search funnel.

Employers still use Google, of course. But increasingly, they also use AI-generated answers to speed up research. Instead of reviewing ten tabs, they ask a tool to narrow the field for them. That sounds efficient. For brands, it also raises the stakes.

In hiring-related categories, trust concerns spread quickly. Discussions about fake listings, scams, poor candidate experiences, or unreliable platforms can surface publicly and stay visible for a long time.

Once AI systems begin pulling from those environments, a brand’s discovery problem becomes larger than rankings.

That is the core market shift this case captures. Platforms such as Google AI Overviews, Gemini, and ChatGPT do not just show a ranked list.

They synthesize from multiple sources, often leaning heavily on public discussion environments and high-authority community pages when forming recommendations.

That means a few prominent negative or outdated threads can shape what AI repeats, while more favorable but less visible content may barely register.

So the challenge here was not only reputation management in the traditional sense. It was citation architecture: the source environment influencing how AI systems described and compared the platform at the exact moment employers were deciding where to post jobs.

What the Brand Needed

The brand did not just need more traffic.

It needed a reliable way to understand whether it was becoming more visible inside AI-generated answers, and whether that visibility was improving against competitors. Traditional SEO reporting could not answer that on its own.

The platform needed a repeatable system to track AI share of voice, citation sources, and brand mentions across AI-generated answers. It needed to know:

- How often the brand appeared relative to competitors

- Which URLs and discussion sources were shaping AI responses

- How frequently the brand was named inside AI-generated answers

Those are different questions from “Where do we rank?” and they reflect a broader change in how discovery works now.

The company also needed more than a one-off experiment. It needed a measurable program that could be tracked over time and tied back to commercially relevant visibility gains.
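The three questions above can be turned into simple counts over a log of captured AI answers. What follows is a minimal sketch, not the reporting system used in the case: the `AIAnswer` record shape, the brand names, and the example URLs are all hypothetical. Share of voice is computed here as a brand's answer-level mentions divided by all brand mentions, which is only one of several reasonable definitions.

```python
from collections import Counter
from dataclasses import dataclass, field
from urllib.parse import urlparse


@dataclass
class AIAnswer:
    """One captured AI-generated answer (hypothetical record shape)."""
    platform: str            # e.g. "AI Overviews", "ChatGPT"
    query: str               # a question an employer might ask
    brands_mentioned: list   # brands named in the answer text
    cited_urls: list = field(default_factory=list)


def mention_rate(answers, brand):
    """Fraction of answers that name the brand at least once."""
    if not answers:
        return 0.0
    named = sum(1 for a in answers if brand in a.brands_mentioned)
    return named / len(answers)


def share_of_voice(answers):
    """Each brand's share of all answer-level brand mentions (assumed definition)."""
    counts = Counter()
    for a in answers:
        counts.update(set(a.brands_mentioned))  # count a brand once per answer
    total = sum(counts.values())
    return {b: n / total for b, n in counts.items()} if total else {}


def top_cited_domains(answers, n=5):
    """Most frequently cited domains across all answers."""
    domains = Counter(urlparse(u).netloc for a in answers for u in a.cited_urls)
    return domains.most_common(n)


# Illustrative data only; none of this comes from the case study.
answers = [
    AIAnswer("AI Overviews", "best job posting sites", ["BrandA", "BrandB"],
             ["https://forum.example.com/t/1", "https://reviews.example.org/job-boards"]),
    AIAnswer("ChatGPT", "where should I post jobs", ["BrandB"],
             ["https://forum.example.com/t/2"]),
    AIAnswer("Gemini", "is BrandA legit", ["BrandA"],
             ["https://forum.example.com/t/1"]),
    AIAnswer("Perplexity", "compare job boards", ["BrandA", "BrandB", "BrandC"]),
]

print(mention_rate(answers, "BrandA"))
print(share_of_voice(answers))
print(top_cited_domains(answers))
```

Keeping mention rate and share of voice as separate numbers is deliberate: a brand can be named in most answers and still hold a small share if competitors are named alongside it every time.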

What CiteWorks Studio Did

CiteWorks Studio began by mapping AI visibility and citation sources across the major AI platforms shaping discovery.

According to the case, reporting tracked citations and brand mentions across AI Overviews, ChatGPT, Gemini, AI Mode, Perplexity, and Copilot, with close attention to the domains and discussion environments most likely to influence recommendations in the category.

From there, the work became more selective and more strategic.

Instead of assuming every visibility effort would contribute equally, the program tracked month-over-month movement to see what was actually changing brand presence in AI answers. That made it possible to scale what produced measurable lift and pause what did not.
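A month-over-month review of this kind can be sketched in a few lines. The tactic names, mention counts, and the 5% lift threshold below are hypothetical, and attributing mentions to a single tactic is itself an assumption; the case does not describe how its own scale-or-pause decisions were made.

```python
from typing import Optional


def pct_change(previous: int, current: int) -> Optional[float]:
    """Percent change from previous to current; None when there is no baseline."""
    if previous == 0:
        return None
    return (current - previous) * 100.0 / previous


def review_tactics(monthly_mentions: dict, min_lift_pct: float = 5.0) -> dict:
    """Label each tactic 'scale', 'pause', or 'review' from two months of counts.

    monthly_mentions maps a tactic name to (last_month, this_month) brand
    mentions attributed to it. The threshold is an illustrative default.
    """
    decisions = {}
    for tactic, (prev, curr) in monthly_mentions.items():
        change = pct_change(prev, curr)
        if change is None:
            decisions[tactic] = "review"   # no baseline yet, so no verdict
        elif change >= min_lift_pct:
            decisions[tactic] = "scale"
        else:
            decisions[tactic] = "pause"
    return decisions


# Illustrative counts only.
monthly_mentions = {
    "forum_participation": (40, 52),
    "generic_blog_posts": (30, 30),
    "new_channel": (0, 4),
}
decisions = review_tactics(monthly_mentions)
print(decisions)
```

In practice a single month is a noisy signal, so a team would likely want several months of data before pausing anything.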

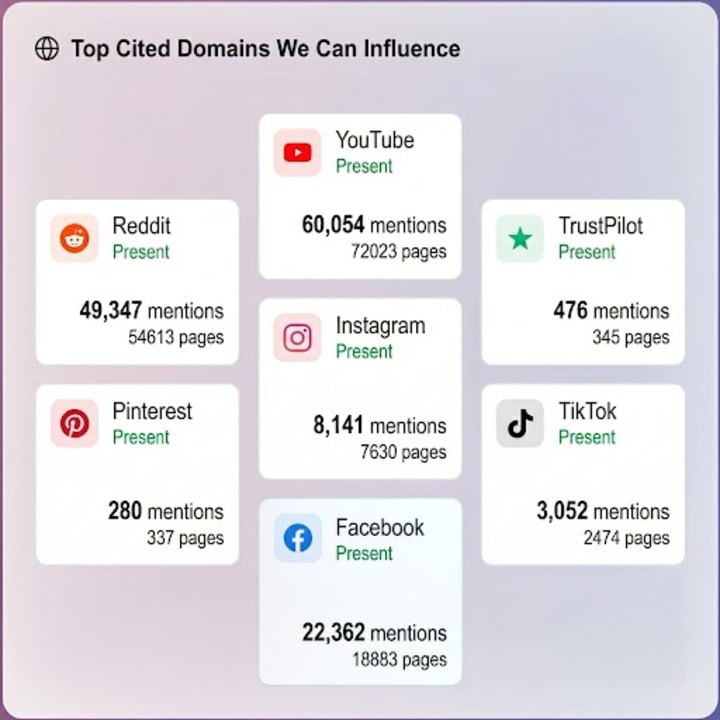

One of the most important strategic choices was focusing on the channels LLMs were already using. In this category, popular public forums were among the brand’s top cited domains.

So rather than defaulting to generic content production, the work emphasized stronger representation in high-intent public discussions that were already influencing AI-generated recommendations.

That distinction matters.

The goal was not simply to publish more. It was to improve the sources AI systems were already relying on when answering employment-related queries.

The VP of Marketing quote referenced in the case captures the shift clearly: the win was not only in rankings, but in what AI systems recommended when employers searched without knowing the brand name in advance.

That is recommendation-stage visibility, and it is becoming one of the most commercially important forms of discovery.

Results From the Campaign

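The outcomes reported in the case span both traditional search visibility and AI-driven discovery: a 71% increase in brand mentions in AI Overviews, 2,791 keywords ranking in Google’s top 10, and improved citation context across 100+ pages influencing AI-generated answers.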

These numbers matter on their own. But what makes them more interesting is what they suggest: stronger performance in search and stronger presence in the citation environments influencing AI-generated answers were moving together, not separately.

AI visibility is not just about whether a brand is named. It is also about where that name appears, what context surrounds it, and whether the sources being cited strengthen trust or weaken it.

There is more detail in the full case study, including additional campaign metrics, delivery context, and methodology notes that are worth reviewing directly.

One of the biggest headline figures in the case is the estimated $8,797,300.28 in total monthly branded value, including $4,629,347.18 in organic keyword value and $4,167,953.10 in LLM-cited pages value.

The source is careful about how that figure is presented, and that caution matters. It is framed as a directional estimate based on tracked keyword visibility, combined monthly search volume, and paid search benchmark value. It is not presented as an exact attribution.

That caveat should stay attached to the number. The figure is valuable as a model of visibility impact, not as a claim of directly booked revenue.
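The reported components can be checked with a short calculation. The first part uses only the three figures quoted above. The second part is an assumption: the case study does not publish its formula, so `directional_keyword_value` shows one common way such a directional estimate could be built (search volume times an assumed click share times a paid search benchmark cost per click), with made-up inputs.

```python
from decimal import Decimal

# Figures as reported in the case study.
organic_keyword_value = Decimal("4629347.18")
llm_cited_pages_value = Decimal("4167953.10")
reported_total = Decimal("8797300.28")

# The two components add up to the headline figure.
total = organic_keyword_value + llm_cited_pages_value
components_match = total == reported_total

# Share of the total contributed by each component, rounded to 0.1%.
organic_share = (organic_keyword_value / total * 100).quantize(Decimal("0.1"))
llm_share = (llm_cited_pages_value / total * 100).quantize(Decimal("0.1"))


def directional_keyword_value(rows):
    """Sum of volume * click share * benchmark CPC.

    ASSUMED formula for illustration; not the case study's method.
    rows: iterable of (monthly_search_volume, click_share, benchmark_cpc).
    """
    return sum(
        (Decimal(v) * Decimal(share) * Decimal(cpc) for v, share, cpc in rows),
        Decimal("0"),
    )


# Hypothetical keyword rows, not from the case study.
example_rows = [(1000, "0.10", "2.50"), (500, "0.20", "4.00")]
example_value = directional_keyword_value(example_rows)

print(components_match, organic_share, llm_share, example_value)
```

Every input to an estimate like this is a benchmark rather than a booked transaction, which is why the result reads as a model of visibility value and not as revenue.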

Why This Matters for Job Boards and Growth Teams

This case is really about a larger shift in how buyers form a shortlist.

A brand can perform reasonably well in search and still lose ground when recommendation-stage discovery begins. If AI systems are assembling answers from weak, negative, outdated, or incomplete sources, rankings alone will not protect the brand’s position.

That is why citation architecture matters so much now.

For job boards and other trust-sensitive platforms, buyers are often looking for a shortcut to confidence. They want reassurance that a vendor is credible, useful, and worth considering. Increasingly, they are asking AI systems to accelerate that judgment.

When that happens, the public web becomes more than a reputation layer. It becomes part of the recommendation engine.

The practical takeaway is not that SEO matters less. It is that SEO no longer tells the whole story. Brands now need visibility in both search results and AI-generated recommendations.

That means measuring not only rankings, but also where AI systems source their answers, how often the brand is mentioned, and whether that cited context helps or hurts decision-stage trust.

CiteWorks Studio can help you set up LLM visibility reporting that ties directly into marketing outcomes. Reach out if you want this done by experts.

Key Definitions

AI Visibility

AI visibility is how often and how clearly a brand appears inside AI-generated answers, summaries, and recommendations. It matters because buyers increasingly use AI systems to compare vendors before visiting a site, so being absent from those answers can mean losing consideration early.

Citation Architecture

Citation architecture is the network of sources that consistently shapes how AI systems talk about a brand or topic. It matters because AI tools often synthesize from recurring third-party sources, which means the quality, tone, and visibility of those sources can directly influence recommendation-stage perception.

AI Share of Voice

AI share of voice tracks how often a brand appears in AI-generated answers compared with competitors. It is commercially useful because it shows whether a company is present in the recommendation set for important category queries, not just whether its own pages rank in search.

Generative Engine Optimization

Generative engine optimization, or GEO, is the practice of improving the likelihood that AI systems use and cite a brand when generating answers. Unlike traditional SEO, which focuses on ranking pages, GEO focuses on the sources and signals AI tools retrieve, interpret, and combine.

About the Creator

Linda Villar

Data nerd turning complex metrics into compelling narratives. Exploring the 'how' and 'why' behind success stories.
